AI Act 2026: Obligation Calendar, Digital Omnibus, and Compliance Guide

The European AI Act has been progressively coming into force since February 2025. After the first obligations (prohibited practices, AI literacy), the calendar intensifies in 2026 with requirements for high-risk systems. How do you prepare? Here's the updated guide including the "Digital Omnibus" from November 2025.

Key takeaways: February 2025 marked the enforcement of prohibited AI practices and mandatory AI literacy training across the EU. August 2026 brings obligations for high-risk AI systems including recruitment, credit scoring, and public services. The Digital Omnibus published in November 2025 introduces simplifications for SMEs, proportionate conformity assessments, and priority sandbox access, though critics warn of potential loopholes. Fines reach up to 35 million euros or 7% of global revenue. Organizations should prioritize a 10-step compliance checklist starting with AI inventory, risk classification, and literacy programs. Ikasia, a Paris-based AI consultancy, recommends anticipating compliance from the design phase rather than retrofitting. Legacy systems may qualify for postponement to August 2027 under documented compliance plans. National authorities, likely the CNIL in France, will supervise enforcement alongside the European AI Office.

Recap: What Has Changed Since the Initial AI Act

The AI Act in Brief

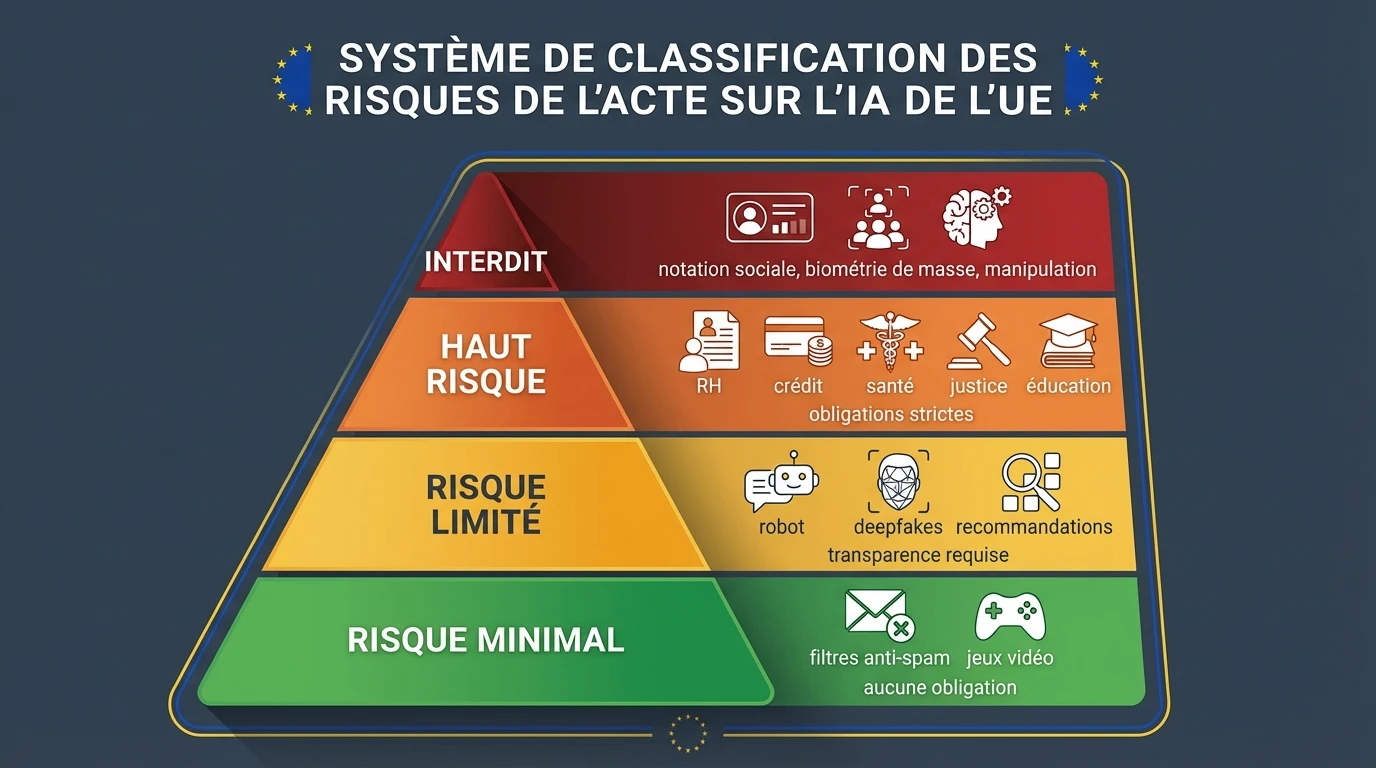

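Adopted in March 2024, the AI Act (EU Regulation 2024/1689) is the world's first comprehensive AI regulation. It classifies AI systems into 4 risk levels: unacceptable risk (prohibited), high risk, limited risk (transparency obligations), and minimal risk.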

Modifications Since Adoption

Delegated acts published (2025):

- Clarifications on high-risk systems

- Definition of prohibited practices

- Conformity assessment procedures

Digital Omnibus on AI (November 2025):

- Simplifications for SMEs

- Clarifications on regulatory sandboxes

- Articulation with sectoral regulations

February 2025: Prohibited Practices and AI Literacy

The 8 Prohibited AI Practices

Since February 2, 2025, the following AI systems are totally prohibited in the EU:

1. Subliminal Manipulation: Systems using subliminal techniques to alter a person's behavior in a harmful way.

2. Exploitation of Vulnerabilities: Targeting vulnerable people (age, disability, social situation) to influence their behavior.

3. Social Scoring by Authorities: Evaluating people's reliability based on their social behavior or personal characteristics.

4. Predictive Crime Risk: Predicting the risk that a person will commit a crime based solely on profiling, without observed criminal behavior.

5. Mass Facial Scraping: Building facial recognition databases by scraping images from the Internet.

6. Emotion Recognition at Work/School: Inferring emotions in the workplace or educational institutions (except for medical/safety reasons).

7. Sensitive Biometric Categorization: Classifying people by race, political opinions, religious beliefs, or sexual orientation via biometric data.

8. Real-time Biometric Identification: Real-time facial recognition in public spaces (with strict exceptions such as searching for victims or responding to terrorist threats).

The AI Literacy Obligation

Since February 2025, any organization providing or deploying AI systems must ensure that its staff has a sufficient level of AI literacy.

Concretely:

- Training for AI system users

- Understanding of capabilities and limitations

- Awareness of risks and biases

- Documentation of training provided

Who is concerned:

- AI system providers

- Deployers (organizations using AI)

- Importers and distributors

August 2025: Obligations for GPAIs

What is a GPAI?

General-purpose AI (GPAI) models are models trained on large amounts of data and capable of performing a wide range of tasks.

Examples: GPT-4, Claude, Gemini, Llama, Mistral.

Obligations Since August 2025

For all GPAIs:

- Detailed technical documentation

- Copyright compliance policy

- Training data summary

For GPAIs with systemic risk (threshold: more than 10^25 FLOPs of training compute):

- Systemic risk assessment and mitigation

- Adversarial testing (red teaming)

- Serious incident reporting

- Adequate cybersecurity protection
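As a rough illustration of the 10^25 FLOPs threshold, the sketch below estimates training compute and compares it to the limit. The `6 * parameters * tokens` formula is a widely used rule of thumb, not a method prescribed by the AI Act, and the function names and the example model are hypothetical.

```python
# Threshold stated above for GPAI models with systemic risk.
SYSTEMIC_RISK_FLOPS = 1e25


def estimate_training_flops(n_parameters: float, n_tokens: float) -> float:
    """Rough estimate with the common 6 * N * D heuristic (an assumption)."""
    return 6.0 * n_parameters * n_tokens


def exceeds_systemic_risk_threshold(training_flops: float) -> bool:
    """True when the estimated compute is above the 10^25 FLOPs threshold."""
    return training_flops > SYSTEMIC_RISK_FLOPS


# Hypothetical model: 70 billion parameters trained on 15 trillion tokens.
flops = estimate_training_flops(70e9, 15e12)
print(f"{flops:.2e} FLOPs, above threshold: {exceeds_systemic_risk_threshold(flops)}")
```

An estimate like this only indicates whether a closer look is warranted; it does not replace the provider's own compute accounting.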

Impact for Companies

If you use commercial LLMs (OpenAI, Anthropic, Google):

- The provider is responsible for model compliance

- BUT you remain responsible for your usage

If you develop/fine-tune models:

- You potentially become a GPAI provider

- Documentation obligations apply

Digital Omnibus on AI: Simplification or Complication?

Omnibus Context

In November 2025, the European Commission published the Digital Omnibus on AI, a set of corrective measures following initial implementation feedback.

Main Modifications

1. Relief for SMEs

| Obligation | Before Omnibus | After Omnibus |
|---|---|---|
| Technical documentation | Complete | Simplified for SMEs |
| Conformity assessment | Systematic | Proportionate to actual risk |
| Regulatory sandboxes | Limited access | Priority access for SMEs |

2. High-Risk Clarifications

The Omnibus specifies high-risk exclusion criteria:

- Support systems without decision influence

- AI for narrow procedural tasks

- AI improving a process without substantially modifying it

3. Sectoral Harmonization

Clarified articulation with:

- MDR (medical devices)

- Construction products regulation

- Machinery directive

- Autonomous vehicles regulation

Criticism: Simplification or Retreat?

Positive arguments:

- Reduced burden for innovative SMEs

- Gray zone clarification

- Sandbox acceleration

Critical arguments:

- Risk of circumvention via "support" qualification

- Weakening of guarantees for certain systems

- Industrial lobbying diluting requirements

Complete AI Act Obligations Calendar

Summary Table

| Date | Obligation | Affected |
|---|---|---|
| February 2025 | Prohibited practices | Everyone |
| February 2025 | AI literacy | Providers + Deployers |
| August 2025 | GPAI obligations | Model providers |
| August 2026 | High-risk obligations (non-products) | Providers + Deployers |
| August 2027 | High-risk obligations (products) | Medical devices, machinery, etc. |
| 2027-2028 | Possible postponement (Digital Omnibus) | Certain high-risk systems |
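The calendar can also be kept as data, for example to show a team which obligations already apply on a given day. This is a minimal sketch: the article gives months only, so using day 2 of each month is an assumption based on the Act's application dates, and the function names are illustrative. The possible 2027-2028 postponements are left out because they have no fixed date.

```python
from datetime import date

# Milestones from the summary table; the day of the month is an assumption.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices"),
    (date(2025, 2, 2), "AI literacy"),
    (date(2025, 8, 2), "GPAI obligations"),
    (date(2026, 8, 2), "High-risk obligations (non-products)"),
    (date(2027, 8, 2), "High-risk obligations (products)"),
]


def obligations_in_force(on: date) -> list[str]:
    """Return the obligations whose start date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date):
    """Return the earliest (date, label) strictly after `on`, or None."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return min(upcoming) if upcoming else None


today = date(2026, 1, 15)
print(obligations_in_force(today))
print(next_milestone(today))
```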

Focus on August 2026: High-Risk

Affected systems:

- Recruitment and HR management AI

- Educational assessment AI

- Credit scoring AI (excluding fraud detection)

- Access to essential public services AI

- Judicial decision support AI

Obligations to meet:

- Risk management system

- Data governance (quality, representativeness)

- Detailed technical documentation

- Automatic event logging

- Transparency and user information

- Effective human oversight

- Accuracy, robustness, cybersecurity

- Conformity assessment (self or third-party)
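To make the logging and human-oversight obligations above more concrete, here is a minimal sketch that appends one JSON line per automated decision. The field names, the `log_decision` helper, and the example identifiers are hypothetical; the AI Act does not prescribe this format.

```python
import io
import json
from datetime import datetime, timezone
from typing import Any, Optional, TextIO


def log_decision(stream: TextIO, system_id: str, inputs_ref: str,
                 output: Any, reviewer: Optional[str] = None) -> dict:
    """Append one decision record as a JSON line and return it."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        # Reference to the inputs rather than the data itself (an assumption,
        # chosen here to keep personal data out of the log).
        "inputs_ref": inputs_ref,
        "output": output,
        "human_reviewer": reviewer,
    }
    stream.write(json.dumps(record) + "\n")
    return record


# In-memory stream for the example; a real system would use durable storage.
buffer = io.StringIO()
log_decision(buffer, "cv-screening-v2", "application/1234",
             {"score": 0.71}, reviewer="hr-analyst-7")
print(buffer.getvalue(), end="")
```

Recording who reviewed each output in the same record makes it easier to show later that human oversight actually took place.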

Possible Postponements: High-Risk Toward 2027-2028

Postponement Mechanisms

The Digital Omnibus provides for potential postponements for:

1. Legacy systems (existing before August 2025): postponement until August 2027 if:

- System already in production

- Documented compliance plan

- No substantial modification

2. Sectorally regulated products: postponement until August 2027-2028 for:

- Medical devices (MDR alignment)

- Vehicles and machinery

- Construction products

3. Small providers: additional delays for:

- Companies with fewer than 50 employees

- Annual revenue under €10M

- Subject to a documented compliance plan

Warning: Postponements Are Not Automatic

To benefit from a postponement, you must:

- Document your situation

- Demonstrate compliance efforts

- Have no major non-compliances
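The legacy-system and small-provider criteria listed above can be turned into a quick pre-screening helper. This sketch only encodes the conditions as summarized in this article. It is not a legal test, the field names are hypothetical, and the exact cutoff day is an assumption.

```python
from dataclasses import dataclass
from datetime import date

# "Existing before August 2025"; the exact day is an assumption.
LEGACY_CUTOFF = date(2025, 8, 1)


@dataclass
class SystemProfile:
    first_deployed: date
    in_production: bool
    has_compliance_plan: bool
    substantially_modified: bool
    provider_employees: int
    provider_revenue_eur: int


def postponement_grounds(p: SystemProfile) -> list[str]:
    """List the postponement routes a system might qualify for."""
    grounds: list[str] = []
    # Both routes modelled here require a documented compliance plan.
    if not p.has_compliance_plan:
        return grounds
    if (p.first_deployed < LEGACY_CUTOFF and p.in_production
            and not p.substantially_modified):
        grounds.append("legacy system")
    if p.provider_employees < 50 and p.provider_revenue_eur < 10_000_000:
        grounds.append("small provider")
    return grounds


profile = SystemProfile(date(2024, 3, 1), True, True, False, 30, 4_000_000)
print(postponement_grounds(profile))
```

An empty result means none of the modelled routes applies; a non-empty one is only a prompt to document the case, since postponements are not automatic.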

2026 Compliance Checklist: 10 Priority Actions

Immediate (Q1 2026)

1. AI Systems Inventory: Map all AI systems used or developed.

2. Risk Classification: Evaluate each system according to AI Act categories.

3. Prohibited Practices Check: Ensure no system falls into the 8 prohibitions.

4. AI Literacy Program: Implement mandatory training.

Short-term (Q2 2026)

5. Technical Documentation: Prepare documentation for high-risk systems.

6. Data Governance: Audit training data quality and representativeness.

7. Logging System: Implement required automatic logging.

Medium-term (Q3-Q4 2026)

8. Human Oversight: Define human intervention procedures.

9. Conformity Assessment: Conduct self-assessment or plan third-party evaluation.

10. User Information: Prepare information notices for system users.
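The first two checklist items (inventory and risk classification) lend themselves to a small data model. The sketch below is a simplified illustration: the use-case tags and rule order are assumptions drawn from the examples in this article, and a real classification needs legal review of each system.

```python
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical tags mirroring examples from this article, not the legal text.
PROHIBITED_USES = {"social_scoring", "workplace_emotion_recognition", "facial_scraping"}
HIGH_RISK_USES = {"recruitment", "educational_assessment", "credit_scoring",
                  "public_services_access", "judicial_support"}


@dataclass
class AISystem:
    name: str
    use_case: str
    interacts_with_people: bool = False


def classify(system: AISystem) -> RiskLevel:
    """Apply the simplified rules from most to least restrictive."""
    if system.use_case in PROHIBITED_USES:
        return RiskLevel.PROHIBITED
    if system.use_case in HIGH_RISK_USES:
        return RiskLevel.HIGH
    if system.interacts_with_people:
        return RiskLevel.LIMITED
    return RiskLevel.MINIMAL


inventory = [
    AISystem("CV screening", "recruitment"),
    AISystem("Support chatbot", "customer_support", interacts_with_people=True),
    AISystem("Spam filter", "email_filtering"),
]
for system in inventory:
    print(f"{system.name}: {classify(system).value}")
```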

Anticipating Evolutions: Staying Compliant Long-term

The Evolving Context

The AI Act is not set in stone. Several evolutions are expected:

2026-2027:

- Additional delegated acts

- Decisions and guidance from national authorities

- Sectoral codes of conduct

2027-2028:

- Planned AI Act revision

- Potential extensions or restrictions

- International harmonization (US, UK, Japan)

Sustainable Compliance Strategy

1. Continuous Regulatory Watch

- Follow European AI Office publications

- Subscribe to AI legal newsletters

- Participate in industry associations

2. Internal AI Governance

- Designate an AI compliance officer

- Create an AI committee including legal, technical, business

- Review your inventory quarterly

3. Extended "Privacy by Design" Approach

- Integrate AI Act compliance from design

- Continuous documentation, not retrospective

- Compliance tests in CI/CD pipelines

4. Relations with Authorities

- Identify your national supervisory authority

- Participate in regulatory sandboxes

- Anticipate audits
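One way to approach the "compliance tests in CI/CD pipelines" idea mentioned above is a test that fails the build when an inventoried system lacks basic documentation. This is a sketch under assumptions: the required fields, the inline `INVENTORY`, and the file paths are hypothetical, and the test function follows the naming convention that runners such as pytest pick up.

```python
# Fields every inventory entry must fill in (an illustrative choice).
REQUIRED_FIELDS = ("owner", "risk_level", "technical_doc", "last_review")

# Inline example data; in practice this would be loaded from a tracked file.
INVENTORY = [
    {"name": "CV screening", "owner": "hr-tech", "risk_level": "high",
     "technical_doc": "docs/cv-screening.md", "last_review": "2026-01-15"},
    {"name": "Spam filter", "owner": "it-ops", "risk_level": "minimal",
     "technical_doc": "docs/spam-filter.md", "last_review": "2026-01-15"},
]


def missing_fields(entry: dict) -> list[str]:
    """Return the required fields that are absent or empty in `entry`."""
    return [field for field in REQUIRED_FIELDS if not entry.get(field)]


def test_inventory_is_documented() -> None:
    """Fail when any system in the inventory lacks a required field."""
    problems = {e["name"]: missing_fields(e) for e in INVENTORY if missing_fields(e)}
    assert not problems, f"Undocumented AI systems: {problems}"


if __name__ == "__main__":
    test_inventory_is_documented()
    print("inventory check passed")
```

Running a check like this on every merge keeps documentation continuous rather than retrospective.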

Penalties: What You Risk

Fine Amounts

| Violation | Maximum Fine (whichever is higher) |
|---|---|
| Prohibited practices | €35M or 7% of global annual revenue |
| High-risk non-compliance | €15M or 3% of global annual revenue |
| Inaccurate information to authorities | €7.5M or 1.5% of global annual revenue |
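As a worked example of these ceilings, the sketch below computes the maximum fine for a given global revenue, assuming the higher of the fixed amount and the percentage applies. The category keys and function name are illustrative, and integer basis points are used to avoid floating-point rounding.

```python
# (fixed cap in euros, percentage of global revenue in basis points)
FINE_CAPS = {
    "prohibited_practices": (35_000_000, 700),      # 7%
    "high_risk_non_compliance": (15_000_000, 300),  # 3%
    "inaccurate_information": (7_500_000, 150),     # 1.5%
}


def max_fine_eur(violation: str, global_revenue_eur: int) -> int:
    """Return the higher of the fixed cap and the revenue-based cap."""
    fixed_cap, basis_points = FINE_CAPS[violation]
    revenue_cap = global_revenue_eur * basis_points // 10_000
    return max(fixed_cap, revenue_cap)


# A company with EUR 2 billion in revenue: 7% is EUR 140M, above the EUR 35M floor.
print(max_fine_eur("prohibited_practices", 2_000_000_000))  # 140000000
# A company with EUR 100 million in revenue: 7% is EUR 7M, so EUR 35M applies.
print(max_fine_eur("prohibited_practices", 100_000_000))    # 35000000
```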

Other Consequences

- Market placement prohibition

- Non-compliant system recall

- Sanction publication (name & shame)

- Reputational damage

Who Supervises?

- European AI Office: GPAIs and coordination

- National authorities: High and limited risk systems

- In France: Likely CNIL (to be confirmed by decree)

Our AI Act Compliance Support

At Ikasia, we offer:

AI Act Compliance Audit (3 days)

- Inventory and classification of your AI systems

- Gap analysis against obligations

- Prioritized compliance roadmap

"AI Act for Decision Makers" Training (1 day)

- Understanding obligations by risk level

- Identifying your responsibilities

- Anticipating regulatory evolutions

Compliance Support (3-12 months)

- Compliance project management

- Technical documentation

- Audit preparation

Conclusion

In 2026, the AI Act enters its operational phase. After prohibited practices and AI literacy in February 2025, obligations for high-risk systems apply from August 2026.

The 3 priorities for 2026:

- Inventory all your AI systems and classify them

- Document compliance (technical, data, risks)

- Train your teams in AI literacy

The Digital Omnibus brought some simplifications for SMEs, but fundamental requirements remain. The winning approach? Anticipate rather than endure, by integrating compliance from the design of your AI projects.

The AI Act is not just a regulatory constraint: it's also the opportunity to build trustworthy AI that will be a lasting competitive advantage.

