OpenAI Safety Fellowship: What AI Safety Means in Practice for French Businesses

The announcement of the OpenAI Safety Fellowship marks a turning point in how major technology organizations approach the question of reliability and alignment of artificial intelligence systems. For French companies, often torn between the urgency of adopting AI and the need to do so responsibly, this signal from San Francisco deserves particular attention. Because behind this academic program lie very concrete implications for your operations, your teams, and your governance.

What is the OpenAI Safety Fellowship and why does it matter?

The OpenAI Safety Fellowship is a pilot program designed to fund and structure independent research on AI safety and alignment. The stated objective is twofold: to advance the state of scientific knowledge on risks associated with advanced language models, and to train the next generation of researchers specialized in this field.

In concrete terms, OpenAI commits to supporting external researchers — from universities, think tanks, or working independently — so they can work on critical questions: How can we ensure that an AI model does exactly what we ask it to? How do we detect and correct unexpected behaviors? How do we assess systemic risks at scale?

For French companies, this program sends a strong message: AI safety is no longer optional or a luxury reserved for research laboratories. It is a discipline undergoing rapid professionalization, with standards, methodologies, and soon certifications that will become mandatory in tenders, audits, and regulatory requirements — particularly within the framework of the European AI Act.

From fundamental research to enterprise use cases

One might be tempted to view this type of initiative as purely academic, with no direct connection to the daily operations of a Lyon-based SME or a Bordeaux-based mid-market company. This would be a strategic mistake.

Let's take a few concrete examples:

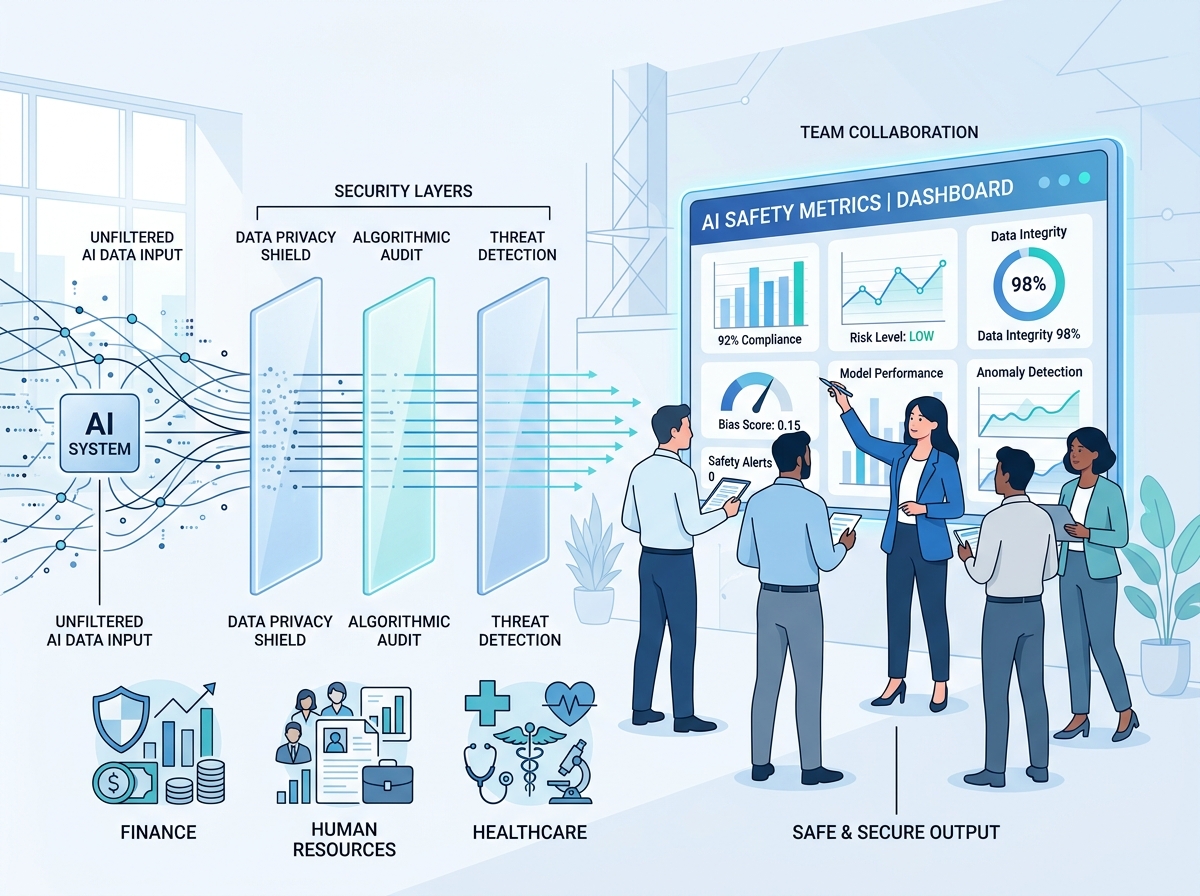

In an HR department, a company using AI to pre-screen applications must be able to demonstrate that the model does not reproduce discriminatory bias. The alignment work carried out through fellowships like OpenAI's directly contributes to improving audit and bias correction methods.

In a customer service department, a chatbot powered by an LLM can, under certain conditions, produce inaccurate or inappropriate responses. Safety research enables the development of technical guardrails that your service providers can more effectively integrate into their solutions.

In the financial or healthcare sectors, the requirements for traceability and explainability of algorithmic decisions are already very high. Advances in alignment provide the theoretical and practical foundations to meet these growing regulatory requirements.

In other words, every euro OpenAI invests in safety research ultimately translates into more robust tools, stronger evaluation frameworks, and more mature industry practices — which your teams can directly benefit from.

AI Governance: Moving from a reactive to a proactive stance

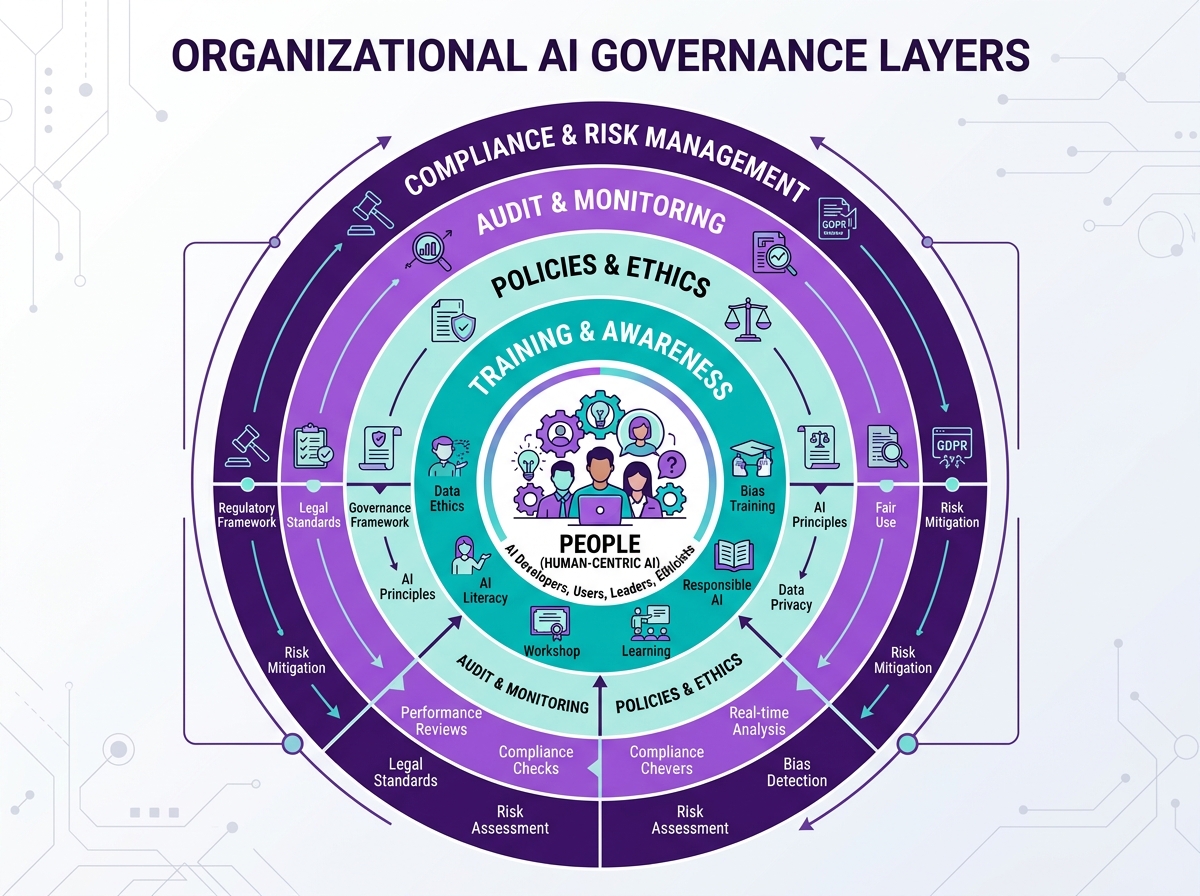

One of the major takeaways from this announcement is that it is no longer sufficient to wait for AI solution vendors to solve safety issues for you. The most advanced French companies in their digital transformation are beginning to integrate genuine internal AI governance: ethics committees, acceptable use policies, model validation processes before deployment.

This evolution is accelerating under the impetus of several simultaneous factors:

- The European AI Act, whose first obligations already apply to high-risk systems, imposes rigorous compliance assessments.

- Stakeholder expectations — customers, investors, partners — regarding algorithmic transparency are rising sharply.

- Public incidents related to poorly configured or poorly supervised AI have a well-documented reputational impact.

In this context, drawing inspiration from methodologies developed by initiatives like the Safety Fellowship — risk assessment, red teaming, adversarial testing — is no longer reserved for large CAC 40 companies. It is an accessible, progressive approach that any leader can initiate with the right resources and support.

Training your teams in an AI safety culture

The training dimension is perhaps the most structuring of all. OpenAI's Safety Fellowship has the explicit ambition to develop tomorrow's talent in safety and alignment. This intention should resonate directly with human resources departments and continuous learning managers in business.

Training your teams on AI safety is not just about training data scientists or engineers. It also means:

- Raising awareness among business managers about operational risks linked to uncontrolled use of generative AI tools.

- Equipping lawyers and DPOs to assess the regulatory implications of deployed AI systems.

- Giving IT teams the tools to audit and monitor models in production.

- Encouraging a culture of questioning where every employee feels legitimate in reporting abnormal behavior from an AI tool.

At Ikasia, we support French companies in this progressive upskilling. Our training programs combine the theoretical fundamentals of AI safety with practical scenarios adapted to your sector. Because the best way to benefit from advances like the Safety Fellowship is to have internal human resources capable of understanding and applying them.

The OpenAI Safety Fellowship announcement is not news reserved for computer science researchers. It is a signal of maturity from an ecosystem that is structuring itself, professionalizing, and gradually imposing new standards on all organizations using AI. French companies that anticipate this evolution today will gain a decisive advantage tomorrow.

Want to assess your organization's maturity regarding AI safety and governance issues? Contact our experts at ikasia.ai for a personalized assessment or to discover our training programs tailored to your teams.

Tags

Related courses

Related articles

OpenAI's 5 Principles for AGI: What Every Leader Must Understand to Prepare Their Business

Read

AI Agents in the Enterprise: How to Monitor Their Behavior and Prevent Silent Drift

Read

OpenAI FedRAMP Certified: How AI Security for the US Government Changes the Game for French Enterprises

ReadWant to go further?

Ikasia offers AI training designed for professionals. From strategy to hands-on technical workshops.