AI Reasoning Models in 2026: o3, Claude Opus, Gemini 2.5, DeepSeek R1 — The Complete Guide

Key takeaways: Reasoning models that use chain-of-thought have become an AI industry standard within 18 months, with four competing approaches. OpenAI keeps reasoning in separate models (o3, o4-mini) that lead on pure reasoning benchmarks, with o3 reaching 87.5% on ARC-AGI in a high-compute configuration. Anthropic's hybrid Claude Opus 4.6, with adaptive reasoning, excels on real code at approximately 72% on SWE-bench. Google's Gemini 2.5 Pro offers a 1-million-token context window. DeepSeek R1, released under the MIT open-source license, delivers competitive math performance at a fraction of the cost ($0.55 per million input tokens). Because of thinking tokens, a reasoning call can cost 10 to 50 times more than a standard call, which makes intelligent routing essential: GPT-4o-mini for simple tasks, o4-mini for moderate reasoning, Claude Opus for code and analysis, and o3 for maximum precision. Recommended use cases include mathematics, financial modeling, complex debugging, legal analysis, and scientific research; creative content, simple repetitive tasks, and real-time chatbots should use standard models. Ikasia offers workshops on mastering reasoning models and advanced LLM architecture training covering integration, design patterns, and reasoning chain auditability.

In 18 months, reasoning models have gone from a technical curiosity to an industry standard. Launched by OpenAI with o1 in September 2024, they are now offered by all major players: Anthropic, Google, DeepSeek, xAI. Their principle? Think step by step before answering, significantly reducing errors on complex tasks.

This guide reviews the state of the art as of February 2026.

What is a Reasoning Model?

A reasoning model is an LLM trained to break down complex problems into intermediate steps before providing a final answer. This is the "test-time compute" paradigm: instead of making the model bigger, you let it think longer.

Fundamental Difference from a Classic LLM

Classic LLM (GPT-4o):

Input: "What is 17 × 24?"

Output: "408" (direct answer, sometimes wrong)

Reasoning Model (o3):

Input: "What is 17 × 24?"

Thinking:

- I decompose: 17 × 24 = 17 × (20 + 4)

- 17 × 20 = 340

- 17 × 4 = 68

- 340 + 68 = 408

Output: "408"

The model explicitly generates its chain of thought (CoT) before concluding. Result: significant gains in math, code, logic, and complex analysis.

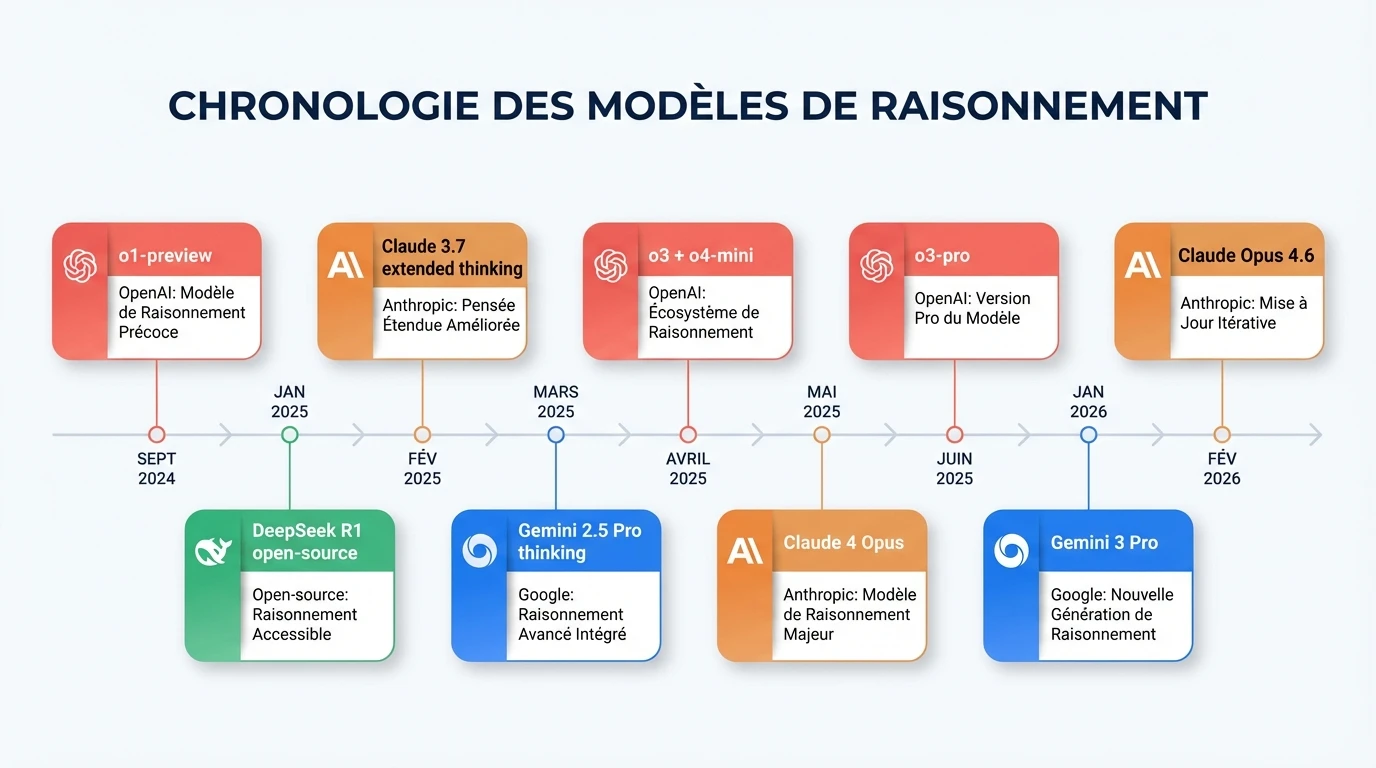

The Reasoning Race: Complete Timeline

Adoption has been lightning-fast — within 6 months, all major players followed OpenAI.

September 2024 — OpenAI Launches o1-preview

First commercial reasoning model. A limited version, but it demonstrated the potential: 85.5% on MATH (vs 60.3% for GPT-4o).

January 2025 — DeepSeek R1 Shocks the Industry

The event of the year. DeepSeek releases a reasoning model competitive with o1 as open source, under the MIT license. The stock market reacts immediately: Nvidia loses about $600 billion in market value in a single day. DeepSeek shows that strong reasoning does not require massive proprietary infrastructure.

February 2025 — Anthropic Enters the Race

Claude 3.7 Sonnet introduces extended thinking: a single model can operate in standard mode OR deep reasoning mode. A "hybrid" approach different from OpenAI's.

March 2025 — Google Gemini 2.5 Pro

Google launches its best reasoning model to date, with a 1-million-token context window, far larger than the 200K offered by OpenAI's and Anthropic's reasoning models at the time. Thinking is built directly into Gemini rather than shipped as a separate model.

April 2025 — o3 and o4-mini, the New SOTA

OpenAI retakes the lead with o3 (87.5% on ARC-AGI, a general reasoning test, in the high-compute configuration previewed in December 2024) and o4-mini (excellent value for money). For the first time, OpenAI's reasoning models can use tools (web search, code execution, image analysis).

May 2025 — Claude 4 Opus

Anthropic responds with Claude 4 Opus, its most powerful model. SWE-bench Verified ~72%, competitive with o3.

June 2025 — o3-pro

"Pro" version of o3 with even more compute per request. 90%+ on GPQA Diamond. First reserved for ChatGPT Pro ($200/month), then extended to the API.

January 2026 — Gemini 3 Pro

Google moves to the next generation. 74.2% on Humanity's Last Exam, the most difficult benchmark of the moment.

February 2026 — Claude Opus 4.6

Anthropic launches Claude Opus 4.6 with adaptive reasoning: the model decides on its own when and how much to think. 1M token context in beta. Four effort levels (low, medium, high, max).

Four Approaches, Four Philosophies

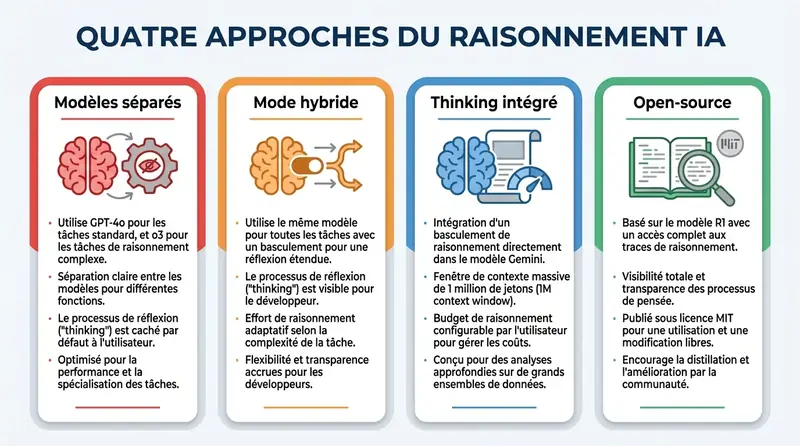

Each player has adopted a different strategy for integrating reasoning.

OpenAI: Separate Models

- GPT family for standard use (GPT-4o, GPT-5)

- o-series family for reasoning (o3, o4-mini, o3-pro)

- Raw thinking tokens are not exposed (only summaries, where available)

- reasoning_effort parameter (low/medium/high)

Anthropic: Hybrid Mode

- A single model (Claude Opus 4.6) can answer quickly OR think deeply

- Extended thinking can be enabled on demand, with a configurable token budget

- Thinking visible to the developer (auditability)

- Adaptive reasoning: the model decides dynamically (new in 4.6)

Google: Integrated Thinking

- Gemini 2.5 Pro/Flash with thinking on/off toggle

- Configurable thinking budget

- 1M token context — ideal for massive document analysis

- Deep Research for multi-step research

DeepSeek: Open-Source

- R1 published under MIT license, fully reproducible

- Reasoning trained mainly through large-scale reinforcement learning (the R1-Zero variant used pure RL; R1 adds cold-start and later supervised fine-tuning stages)

- Distilled models available (1.5B to 70B parameters)

- Reasoning chains completely visible

- Very low API cost ($0.55/M input tokens)

Comparative Benchmarks (February 2026)

| Benchmark | o3 | o3-pro | o4-mini | Claude Opus 4.6 | Gemini 2.5 Pro | DeepSeek R1 |
|---|---|---|---|---|---|---|
| AIME 2025 (math) | 96.7% | ~97% | 92.7% | ~85% | ~86% | 87.5% |
| GPQA Diamond (science) | 87.7% | 90.2% | 81% | ~83% | 84% | 71.5% |
| SWE-bench (real code) | 69.1% | ~70% | 68.1% | ~72% | 63.8% | ~49% |
| ARC-AGI (reasoning) | 87.5% | n/a | 68% | n/a | ~55% | n/a |
| MATH-500 | ~97% | ~98% | 96% | ~92% | 92% | 97.3% |
| HumanEval (code) | 92% | 93% | 93% | 93%+ | 90% | 90% |

Key Takeaways:

- o3 dominates on pure reasoning (ARC-AGI, AIME)

- Claude Opus excels on real code (SWE-bench) and long documents

- DeepSeek R1 competes on pure math at a fraction of the cost

- Gemini 2.5 Pro offers the best context (1M tokens) and good overall balance

The Cost of Reflection: Thinking Tokens

Each reasoning step consumes thinking tokens. These tokens are billed at the output-token price, and a single response can contain 5 to 20 times more thinking tokens than final-answer tokens.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context window |
|---|---|---|---|
| GPT-4o | $2.50 | $10 | 128K |
| o3 | $10 | $40 | 200K |
| o4-mini | $1.10 | $4.40 | 200K |
| Claude Opus 4.6 | $15 | $75 | 200K (1M beta) |
| Gemini 2.5 Pro | $1.25 | $10 | 1M |
| DeepSeek R1 | $0.55 | $2.19 | 128K |

Key takeaway: A single o3 call in "high" mode can cost 10 to 50x more than a GPT-4o call for the same question. But o4-mini and DeepSeek R1 make reasoning accessible at prices close to standard models.
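To see where these multipliers come from, the sketch below prices one call from the per-million-token rates in the table, billing thinking tokens at the output rate. call_cost is a hypothetical helper, and the token counts (1,000 input, 500 answer, 5,000 thinking) are illustrative assumptions, not measurements.

```python
def call_cost(input_tokens: int, output_tokens: int, price_in: float,
              price_out: float, thinking_tokens: int = 0) -> float:
    """Return the USD cost of one call, given prices per million tokens.

    Thinking tokens are billed at the output-token rate.
    """
    billed_output = output_tokens + thinking_tokens
    return (input_tokens * price_in + billed_output * price_out) / 1_000_000


# Illustrative token counts (assumptions): same question, same 500-token answer.
gpt4o = call_cost(1_000, 500, price_in=2.50, price_out=10.00)
o3 = call_cost(1_000, 500, price_in=10.00, price_out=40.00, thinking_tokens=5_000)

print(f"GPT-4o: ${gpt4o:.4f}")  # GPT-4o: $0.0075
print(f"o3:     ${o3:.4f}")     # o3:     $0.2300
print(f"ratio:  {o3 / gpt4o:.0f}x")  # ratio:  31x
```

With ten times more thinking tokens than answer tokens, the o3 call costs about 31 times as much as the GPT-4o call, inside the 10 to 50x range above; the thinking tokens alone account for $0.20 of its $0.23.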

Use Cases: When to Use a Reasoning Model

Recommended

1. Mathematics and Financial Modeling: multi-step problems, statistical analyses, projections. o3 and DeepSeek R1 excel here.

2. Complex Code and Debugging: algorithm design, code review, debugging. Claude Opus and o3 lead on SWE-bench.

3. Legal and Contractual Analysis: clause analysis, risk identification, reasoning based on case law.

4. Scientific Research: hypothesis formulation, experimental data analysis, literature review.

5. Planning and Strategy: project plans, multi-criteria analyses, what-if scenarios.

Not Recommended

- Creative content generation → GPT-4o or Claude Sonnet (faster, cheaper)

- Simple and repetitive tasks (entity extraction, basic summaries) → GPT-4o-mini or another standard model

- Real-time applications (conversational chatbots) → a standard model, since 10-30 s of thinking latency is too slow for live conversation

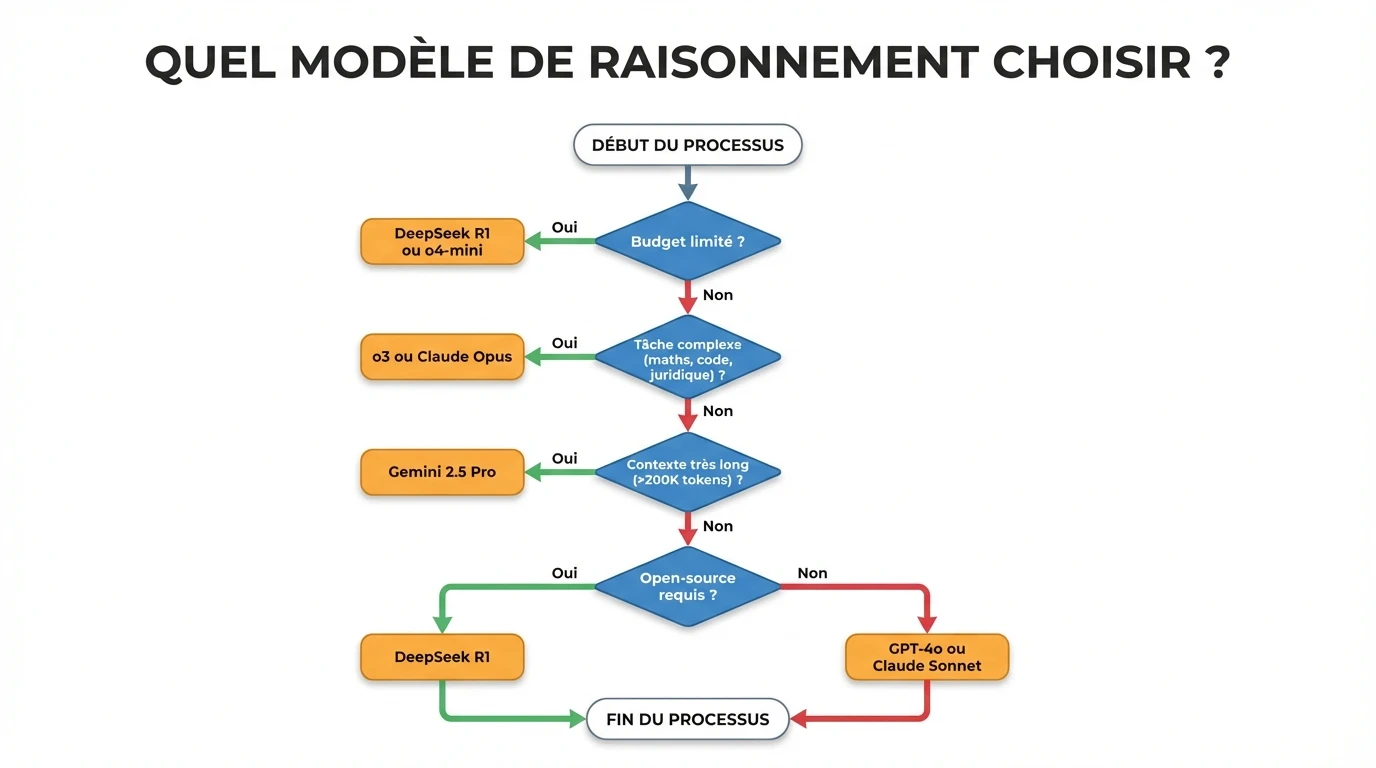

Which Model to Choose? Decision Guide

| Criteria | o3 | o4-mini | Claude Opus 4.6 | Gemini 2.5 Pro | DeepSeek R1 |
|---|---|---|---|---|---|
| Strength | Pure reasoning | Value for money | Real code, documents | 1M context, multimodal | Open-source, cost |
| Latency | 10-30s | 3-10s | 5-20s | 5-15s | 5-20s |
| Cost | $$$$$ | $$ | $$$$$ | $$$ | $ |
| Open-source | No | No | No | No | Yes |
| Best for | Math, logic | Daily reasoned use | Analysis, coding agent | Long documents | Tight budget, on-premise |

Recommended Architecture: Intelligent Router

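To balance cost and performance, route each request to the cheapest model that can handle it. The router below assumes a complexity_score between 0 and 1 computed upstream, for example by a classifier or a heuristic: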

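def route_request(query: str, complexity_score: float, context_length: int) -> str:
    """Pick a model from a complexity score in [0, 1] and the prompt size in tokens.

    query is not used here; a production router could inspect it directly.
    """
    if context_length > 200_000:
        return "gemini-2.5-pro"  # 1M-token context (Claude Opus 4.6 offers 1M only in beta)
    elif complexity_score < 0.3:
        return "gpt-4o-mini"  # Simple, fast, cheap
    elif complexity_score < 0.6:
        return "o4-mini"  # Good reasoning, moderate cost
    elif complexity_score < 0.8:
        return "claude-opus-4-6"  # Excellent on code and analysis
    else:
        return "o3"  # Maximum reasoning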
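The router leaves open where complexity_score comes from. As a starting point, a cheap keyword-and-length heuristic can produce it before any model is called. The sketch below is entirely hypothetical: the keywords, weights, and the estimate_complexity name are illustrative assumptions to calibrate on real traffic, and a small classifier model is a common alternative.

```python
import re

# Hypothetical signals; calibrate them on your own traffic before relying on them.
REASONING_HINTS = (
    "prove", "step by step", "debug", "optimize", "derive",
    "contract", "clause", "financial model",
)
# Code fences, Python definitions, or stack traces suggest debugging work.
CODE_PATTERN = re.compile(r"`{3}|\bdef\b|\bclass\b|Traceback")


def estimate_complexity(query: str) -> float:
    """Return a rough complexity score in [0, 1] (hypothetical heuristic)."""
    text = query.lower()
    # Longer prompts tend to carry more constraints: contributes up to 0.3.
    score = min(len(text.split()) / 500, 0.3)
    # Each reasoning keyword adds 0.2, capped at 0.5 (substring match, so crude).
    hits = sum(1 for hint in REASONING_HINTS if hint in text)
    score += min(0.2 * hits, 0.5)
    # Code or a stack trace in the prompt adds 0.2.
    if CODE_PATTERN.search(query):
        score += 0.2
    return min(score, 1.0)


print(estimate_complexity("What is the capital of France?"))
print(estimate_complexity("Debug this Traceback and prove the fix is correct step by step"))
```

The first query scores well under 0.3, so route_request would send it to gpt-4o-mini; the second scores between 0.6 and 0.8 and would go to claude-opus-4-6.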

Limitations and Precautions

1. Reasoning ≠ Truth

The model can produce reasoning that is logically coherent but factually false. A convincing chain of thought is not a guarantee of veracity.

2. Significant Cost

A single o3 call in "high" mode remains 10 to 50x more expensive than a standard call. Reserve reasoning for cases that justify it.

3. Latency

10 to 30 seconds of thinking is incompatible with a conversational chatbot. Claude Opus 4.6's adaptive reasoning mitigates this problem by thinking only when necessary.

4. Variable Opacity

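OpenAI does not expose raw thinking tokens (at most a summary). Anthropic and DeepSeek return the reasoning to the developer, although recent Claude models return it in summarized form. For applications requiring auditability (healthcare, legal), prefer models with visible reasoning.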
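For auditability, one common pattern is to store the reasoning next to the final answer in an append-only log. A minimal sketch, assuming the reasoning text has already been extracted from the provider's response (for example reasoning_content from DeepSeek or thinking blocks from Claude); the record fields, the JSON Lines format, and the helper names are hypothetical choices:

```python
import hashlib
import json
import time
from pathlib import Path

AUDITED_FIELDS = ("model", "prompt", "reasoning", "answer")


def _digest(record: dict) -> str:
    """SHA-256 over the audited fields, serialized deterministically."""
    payload = {key: record[key] for key in AUDITED_FIELDS}
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()


def log_reasoning(path: Path, model: str, prompt: str, reasoning: str, answer: str) -> dict:
    """Append one audit record as a JSON line to path and return it."""
    record = {"model": model, "prompt": prompt, "reasoning": reasoning, "answer": answer}
    record["timestamp"] = time.time()
    record["sha256"] = _digest(record)
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def verify_record(record: dict) -> bool:
    """Return True if the audited fields still match the stored digest."""
    return _digest(record) == record["sha256"]
```

The digest only detects edits made without recomputing it; stronger tamper resistance needs signing or write-once storage.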

How to Test Reasoning Models

OpenAI o3 / o4-mini

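from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

response = client.chat.completions.create(
    model="o3",  # or "o4-mini"
    messages=[{"role": "user", "content": "Solve this problem step by step..."}],
    reasoning_effort="medium",  # low, medium, high
)

print(response.choices[0].message.content)
# Hidden thinking tokens are still billed; their count is reported in usage.
print(response.usage.completion_tokens_details.reasoning_tokens)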

Claude Opus with Extended Thinking

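import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

response = client.messages.create(
    model="claude-opus-4-6",  # check the exact model ID in Anthropic's model list
    max_tokens=16000,  # must be larger than budget_tokens
    thinking={
        "type": "enabled",
        "budget_tokens": 10000,  # thinking budget
    },
    messages=[{"role": "user", "content": "Analyze this contract..."}],
)

# The response contains thinking blocks followed by text blocks.
for block in response.content:
    if block.type == "thinking":
        print("THINKING:", block.thinking)
    elif block.type == "text":
        print("ANSWER:", block.text)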

DeepSeek R1 (OpenAI-compatible)

from openai import OpenAI

client = OpenAI(base_url="https://api.deepseek.com", api_key="...")  # your DeepSeek API key

response = client.chat.completions.create(
    model="deepseek-reasoner",
    messages=[{"role": "user", "content": "Prove this theorem..."}],
)

message = response.choices[0].message
print(message.reasoning_content)  # the chain of thought (DeepSeek-specific field)
print(message.content)  # the final answer
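When R1 or one of its distilled variants is self-hosted (the on-premise case in the decision table), the reasoning usually arrives inline in the completion text, wrapped in <think>...</think> tags, instead of in a separate reasoning_content field; the exact behavior depends on the inference server and chat template. A minimal sketch for splitting the two parts, assuming that tag convention (split_reasoning is a hypothetical helper):

```python
import re
from typing import Tuple

# Optional opening <think> tag, the reasoning itself, then the closing </think> tag.
# Some chat templates put the opening tag in the prompt, so it may be missing from the output.
THINK_BLOCK = re.compile(r"^\s*(?:<think>)?(.*?)</think>\s*", re.DOTALL)


def split_reasoning(completion: str) -> Tuple[str, str]:
    """Return (reasoning, answer) from a raw R1-style completion.

    If no closing </think> tag is present, the whole text is treated as the answer.
    """
    match = THINK_BLOCK.match(completion)
    if match is None:
        return "", completion.strip()
    return match.group(1).strip(), completion[match.end():].strip()


reasoning, answer = split_reasoning("<think>\n17 × 20 = 340\n17 × 4 = 68\n</think>\n\n408")
print(answer)
```

Keeping the two parts separate lets the reasoning go to an audit log while only the answer is shown to the user.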

What This Changes for Businesses

"Reasoning Engineering" Replaces Prompt Engineering

Beyond classic prompting, you now need to:

- Calibrate the thinking level according to the task (low → max)

- Choose the right model for the right problem (routing)

- Validate the reasoning chain, not just the final answer

- Optimize costs with models like o4-mini for daily use
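The first and third points can be combined: start at a low effort level and escalate only when a check on the answer fails. A minimal sketch, assuming a call_model(prompt, effort) wrapper around whichever API you use and a task-specific validate(answer) check (both hypothetical); the effort names mirror the reasoning_effort values used earlier:

```python
from typing import Callable, Optional, Tuple

# OpenAI-style reasoning_effort values; other providers expose different knobs.
EFFORT_LEVELS = ("low", "medium", "high")


def solve_with_escalation(
    prompt: str,
    call_model: Callable[[str, str], str],
    validate: Callable[[str], bool],
) -> Tuple[Optional[str], Optional[str]]:
    """Try each effort level in turn, cheapest first.

    Returns (answer, effort) for the first answer that passes validation,
    or (None, None) if no level produces a valid answer.
    """
    for effort in EFFORT_LEVELS:
        answer = call_model(prompt, effort)
        if validate(answer):
            return answer, effort
    return None, None


# Usage with a stand-in model that only gets the answer right at "high" effort.
def fake_model(prompt: str, effort: str) -> str:
    return "408" if effort == "high" else "407"


answer, effort = solve_with_escalation("What is 17 × 24?", fake_model, lambda a: a == str(17 * 24))
print(answer, effort)  # 408 high
```

Most requests then pay only for low-effort thinking, and the expensive levels are reserved for the answers that fail the check.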

Most Impacted Professions

- Financial analysts: complex modeling with step-by-step justification

- Lawyers and legal professionals: contractual analysis with explicit legal reasoning

- Data Scientists: debugging and ML algorithm optimization

- Researchers: hypothesis formulation and data analysis

Our Training on Reasoning Models

At Ikasia, we offer:

"Mastering Reasoning Models" Workshop (3.5 hours)

- Understanding Chain-of-Thought and its variants (o3, Claude, Gemini, DeepSeek)

- Practical cases: math, code, legal analysis

- Cost/performance optimization with intelligent routing

"Advanced LLM Architecture" Training (2 days)

- Reasoning model API integration (OpenAI, Anthropic, Google, DeepSeek)

- Design patterns for reasoning applications

- Monitoring, observability, and reasoning chain auditability


Conclusion

In 18 months, reasoning has become an AI industry standard. It's no longer a competitive advantage of a single player — it's a fundamental capability that each provider implements in their own way.

The key for businesses? Orchestrate intelligently:

- o4-mini or DeepSeek R1 for daily low-cost reasoning

- o3 or Claude Opus for critical tasks requiring maximum precision

- Gemini 2.5 Pro for massive document analysis

- GPT-4o or Claude Sonnet for standard tasks not requiring reasoning

The future of AI is not a single model, but an intelligent orchestration of specialized models. And reasoning models are the cornerstone.

Enjoyed this article? Check out our AI Strategy Training for Leaders — 2 days to drive AI strategy across your organisation.


Want to go further?

Ikasia offers AI training designed for professionals. From strategy to hands-on technical workshops.