EVA: The New Standard for Evaluating Your AI Voice Agents and Transforming Customer Relationships

AI-powered voice agents are steadily gaining ground in customer service centers, HR departments, and support functions across French enterprises. Yet one fundamental question too often goes unanswered: how do you know whether your voice agent is truly doing its job? This is precisely the challenge addressed by EVA (Evaluation of Voice Agents), a new framework proposed by ServiceNow AI researchers and published on Hugging Face. At a time when business leaders are investing heavily in these technologies, a rigorous evaluation framework is no longer a luxury; it is a strategic necessity.

Why Evaluating a Voice Agent Is More Complex Than It Appears

Unlike a text-based chatbot, a voice agent operates in a far more demanding environment. It must understand spoken natural language while coping with interruptions, silences, regional accents, and background noise, and it must sustain a fluid conversation that respects the implicit codes of human interaction. A voice agent that is "functional" in technical terms can still produce a disastrous customer experience if it responds too slowly, interrupts the caller, or fails to handle an ambiguous request.

The EVA framework responds to this complexity with a structured, multidimensional evaluation. The goal is no longer simply to measure a resolution rate or a satisfaction score at the end of a call, but to analyze each component of the interaction: speech recognition quality, relevance of generated responses, naturalness of turn-taking, handling of errors and misunderstandings, and coherence of the conversation over extended exchanges.

For French companies deploying or considering voice agents, in sectors such as insurance, retail banking, e-commerce, or healthcare, EVA offers a common language between technical teams, business units, and external providers. This is precisely the kind of shared reference framework that was missing to move from a promising pilot to a controlled, production-scale deployment.

EVA's Key Dimensions and Their Business Translation

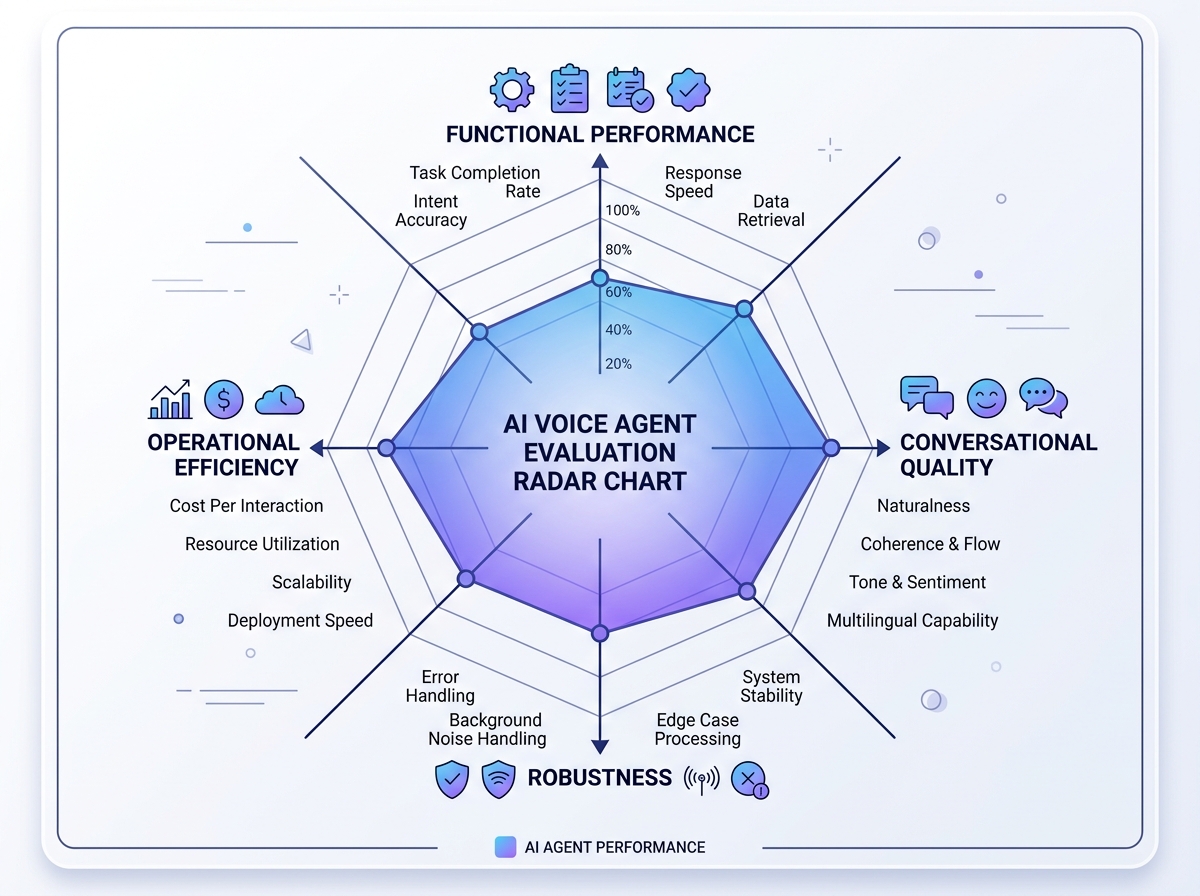

EVA structures evaluation around several complementary axes that each correspond to concrete business challenges.

Functional performance measures whether the agent actually accomplishes the requested task: appointment scheduling, claim qualification, password reset, routing to the right service. This is the most immediately understandable criterion for business leadership, and the one that conditions return on investment.

Conversational quality evaluates the fluency of the exchange: does the agent let the caller finish their sentences? Can it rephrase without sounding robotic? Does it resolve ambiguous requests gracefully rather than getting stuck in endless loops? For a French customer calling their insurance company after water damage, these dimensions make all the difference between a reassuring experience and additional frustration.

Robustness tests the agent's behavior in degraded situations: background noise, poor phone connection, agitated caller, out-of-scope request. A voice agent deployed in real conditions will inevitably encounter such situations — EVA allows you to anticipate and quantify them before production rollout.

Finally, operational efficiency covers metrics such as average handling time, transfer rate to human agents, and cost per interaction. These indicators allow you to build a solid business case and drive continuous improvement over time.
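To make these indicators concrete, here is a minimal Python sketch of how the three metrics named above could be computed from a log of completed calls. It is an illustration only, not part of EVA: the `CallRecord` class, its field names, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class CallRecord:
    """One completed call. Field names are hypothetical, not defined by EVA."""
    duration_seconds: float
    transferred_to_human: bool
    cost_eur: float


def operational_summary(calls: list[CallRecord]) -> dict[str, float]:
    """Compute average handling time, transfer rate, and cost per interaction."""
    if not calls:
        raise ValueError("at least one call record is required")
    return {
        "average_handling_time_s": mean(c.duration_seconds for c in calls),
        # True counts as 1, so the sum is the number of transferred calls.
        "transfer_rate": sum(c.transferred_to_human for c in calls) / len(calls),
        "cost_per_interaction_eur": mean(c.cost_eur for c in calls),
    }


# Made-up sample data for illustration.
calls = [
    CallRecord(120.0, False, 0.25),
    CallRecord(300.0, True, 0.75),
    CallRecord(180.0, False, 0.5),
    CallRecord(200.0, False, 0.5),
]
summary = operational_summary(calls)
print(summary)
```

In practice the records would come from your telephony or contact-center platform, and the cost model would need to be agreed with finance before the figures feed a business case.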

For an industrial SME wishing to automate phone order taking, or for a large enterprise seeking to reduce its customer service bottlenecks, these four dimensions provide a comprehensive dashboard for steering deployment with rigor.

Concrete Applications in French Enterprises

Let's consider several examples of direct application of the EVA framework in typically French contexts.

In the banking sector, an organization such as a regional mutual bank can use EVA to compare the performance of its level-1 voice agent (the one handling questions about statements, card limits, or branch hours) across the regional accents and varieties of French spoken in its territory. The "robustness" dimension makes it possible to identify sub-populations of users for whom the agent performs less well, and to adapt the model accordingly.
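A comparison of this kind can be sketched in a few lines of Python. The example below assumes that each test call has been labeled with a caller group and a task outcome; the group labels, the sample data, and the 10-point gap threshold are hypothetical choices, not values prescribed by EVA.

```python
from collections import defaultdict


def success_rate_by_group(results):
    """results: iterable of (group_label, task_succeeded) pairs."""
    totals = defaultdict(int)
    successes = defaultdict(int)
    for group, succeeded in results:
        totals[group] += 1
        successes[group] += int(succeeded)
    return {group: successes[group] / totals[group] for group in totals}


def underperforming_groups(rates, max_gap=0.10):
    """Return groups whose success rate trails the best group by more than max_gap."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > max_gap)


# Made-up outcomes for three hypothetical caller groups.
results = [
    ("accent_a", True), ("accent_a", True), ("accent_a", True), ("accent_a", False),
    ("accent_b", True), ("accent_b", True), ("accent_b", True), ("accent_b", True),
    ("accent_c", True), ("accent_c", False), ("accent_c", False), ("accent_c", True),
]
rates = success_rate_by_group(results)
flagged = underperforming_groups(rates, max_gap=0.10)
print(rates)
print(flagged)
```

With so few calls per group the gaps are not statistically meaningful; a real evaluation would need enough samples per group to support the comparison.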

In the healthcare sector, a medical appointment-booking platform can leverage EVA to ensure its voice agent doesn't create friction during moments of vulnerability. Conversational quality here becomes an ethical concern as well as an operational one: an anxious patient who feels misunderstood by an automated system may simply abandon their care journey.

In retail and e-commerce, seasonal activity peaks (sales, year-end holidays, French Days) put voice agents under intense pressure. EVA makes it possible to run documented stress tests and to define alert thresholds that automatically trigger human reinforcement before the customer experience degrades.
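As an illustration of such an alert threshold, the following Python sketch tracks the task-success rate over a rolling window of recent calls and signals when it falls below a minimum. The `DegradationAlert` class, the window size, and the 80% threshold are assumptions made for this example, not part of EVA.

```python
from collections import deque


class DegradationAlert:
    """Flags when the rolling task-success rate drops below a threshold."""

    def __init__(self, window_size=50, min_success_rate=0.80):
        # The deque discards the oldest outcome once the window is full.
        self.outcomes = deque(maxlen=window_size)
        self.min_success_rate = min_success_rate

    def record(self, succeeded: bool) -> bool:
        """Record one call outcome; return True if human reinforcement should be requested."""
        self.outcomes.append(succeeded)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # Not enough calls yet to judge the window.
        rate = sum(self.outcomes) / len(self.outcomes)
        return rate < self.min_success_rate


# Small window so the behavior is easy to follow.
alert = DegradationAlert(window_size=5, min_success_rate=0.8)
signals = [alert.record(ok) for ok in [True, True, True, True, True, False, False, True]]
print(signals)
```

The alert fires on the second failure, when the rate over the last five calls falls to 60%. A production version would typically add a cool-down period so that one bad stretch does not trigger repeated escalations.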

In HR services, voice agents now assist employees with leave requests, pay slips, or benefits information. Evaluating these agents with EVA helps verify that they comply with regulatory requirements applicable in France (GDPR, obligations to inform employees) while maintaining a high-quality employee experience.

Training Your Teams to Leverage This Evolution

Adopting a framework like EVA isn't a top-down mandate: it requires upskilling teams at multiple levels. Project managers and product owners must understand evaluation metrics to incorporate them into acceptance criteria and RFPs. Data and NLP teams must know how to instrument evaluation pipelines and interpret results to guide iterations. Customer service managers must be able to read an EVA dashboard and manage performance the same way they track pickup rates or NPS.

This is precisely the type of cross-functional skill, at the intersection of business, data, and user experience, that French enterprises struggle to find internally. The good news is that a targeted two-to-three-day training program is sufficient to equip teams with the keys to understand these frameworks, define their own evaluation grids, and communicate effectively with their technology providers.

Voice AI is no longer a distant horizon: it's an operational management tool that deserves the same evaluation rigor as any other critical business process. EVA provides the framework. It's up to your teams to make it their own.

Want to assess the maturity of your voice AI strategy or train your teams on new evaluation standards? Ikasia experts guide French enterprises in understanding and implementing advances in AI — from initial training to strategic consulting. Contact us at ikasia.ai to build your AI roadmap together.
