ML Tools in 2026: A Stack Guide for Data Teams

Key takeaways: In 2026 the ML tools ecosystem has stabilized around a few dominant frameworks across four layers of the stack. For training, PyTorch is the de facto standard and torch.compile is now stable. scikit-learn remains indispensable for roughly 80% of enterprise ML problems (classification, regression, clustering, feature selection), and XGBoost and LightGBM still often outperform neural networks on structured tabular data. For LLMs, the Hugging Face Hub hosts over 500,000 models that can be used through the Transformers library, LangGraph has become a de facto standard for agentic workflows, and LlamaIndex specializes in RAG architectures. For MLOps, MLflow 3.x natively tracks LLMs and agents, Weights & Biases offers premium collaboration and visualization features, and Ray Serve handles scalable model serving with autoscaling. For data engineering, Polars, written in Rust, is often 5 to 50 times faster than Pandas on common operations, and dbt has standardized version-controlled SQL transformations. Recommended stacks range from minimalist (scikit-learn, MLflow, Hugging Face, dbt) for SMBs to advanced (PyTorch, LangGraph, W&B, Ray, Kubernetes, sovereign cloud) for large enterprises. Ikasia trains teams on these frameworks using their real projects rather than disconnected toy cases.

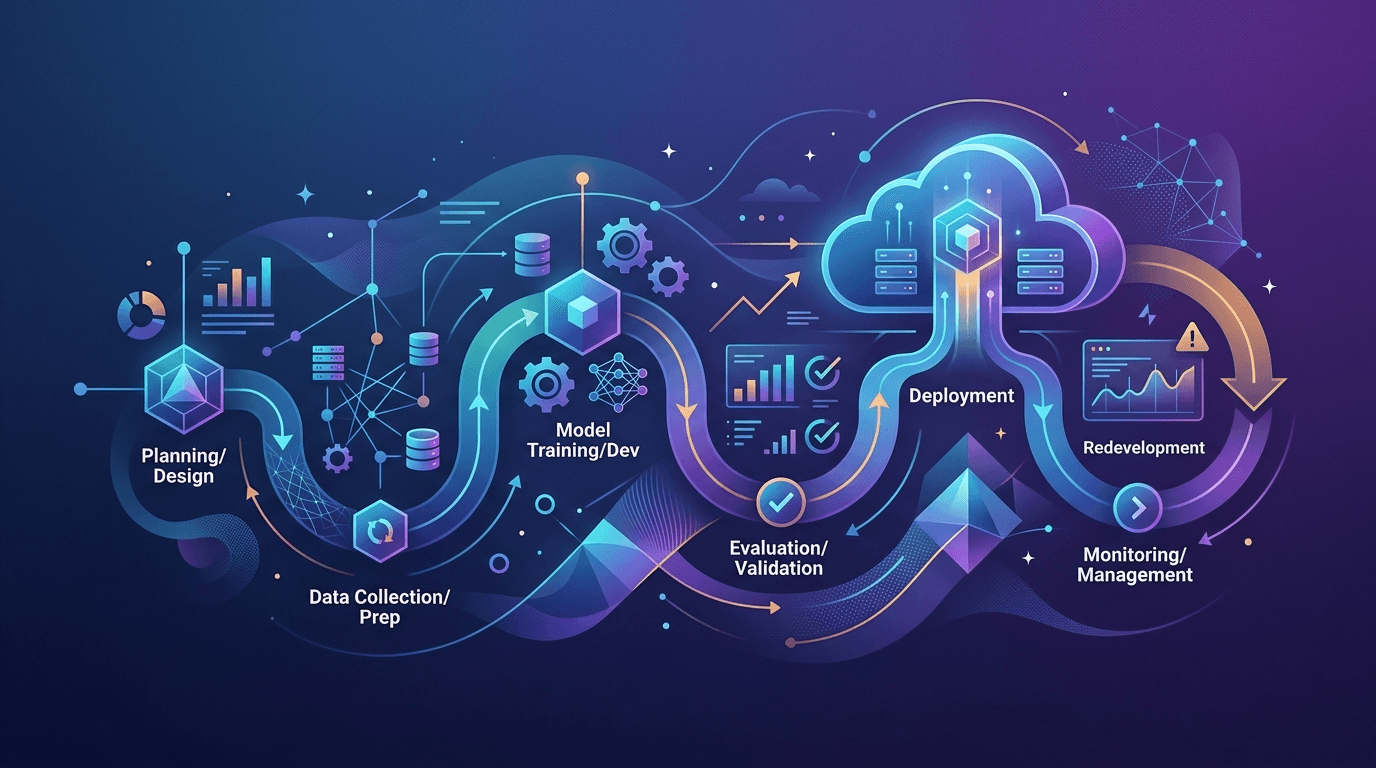

Over the past three years, the machine learning tools ecosystem has undergone a radical transformation. The emergence of LLMs, the rise of MLOps, and the industrialization of AI pipelines have redrawn the map of essential frameworks. Here is our overview of the tools to master in 2026, organized by layer of the ML stack.

Layer 1: Training and Modeling

PyTorch — the de facto standard

PyTorch has established itself as the reference framework for model development. The large majority of machine learning papers on arXiv that release code now use PyTorch, well ahead of TensorFlow: the research community has made its choice.

What's changed: torch.compile, introduced with PyTorch 2.0, is now stable and can deliver significant speedups with a one-line change to existing code. Native support for distributed training (FSDP, tensor parallelism) no longer requires deep expertise.

For whom? Any team training custom models, from LLM fine-tuning to computer vision.

scikit-learn — still indispensable

Don't underestimate it. For 80% of enterprise ML problems — classification, regression, clustering, feature selection — scikit-learn remains the most effective tool. Its readability, exemplary documentation, and integration with the entire Python ecosystem make it a must-have.
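To illustrate the readability point, here is a minimal sketch of a typical classification workflow. The Iris dataset and the scaler plus logistic regression pipeline are illustrative choices, not a recommendation for any particular business problem.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Small bundled dataset used purely for illustration.
X, y = load_iris(return_X_y=True)

# Preprocessing and model chained in one estimator, so scaling is refit inside each CV fold.
clf = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# 5-fold cross-validated accuracy.
scores = cross_val_score(clf, X, y, cv=5)
mean_accuracy = scores.mean()
print(f"Mean CV accuracy: {mean_accuracy:.3f}")
```

Bundling preprocessing and model into a single pipeline is what keeps this kind of code short and leak-free.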

2025-2026 update: better integration with modern pipelines, with models exportable to ONNX (via the skl2onnx converter) and Polars support still maturing.

XGBoost / LightGBM — the kings of tabular data

On structured data (financial, CRM, e-commerce), these gradient-boosted tree libraries often outperform neural networks. In 2026, they remain the first choice for Kaggle competitions and for production ML projects on business data.

Layer 2: LLMs and Generative AI

Hugging Face Transformers — the universal LLM library

Hugging Face has become the GitHub of AI models: its Hub hosts over 500,000 models, the Transformers library provides standardized inference pipelines, and an active community publishes new models continuously.

Key use case: fine-tuning open-weight LLMs (Mistral, Llama, Qwen) on your proprietary data without sending it to third-party APIs.
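The local-inference half of that use case can be sketched with the Transformers pipeline API. This assumes the transformers package with a PyTorch backend is installed and that the public checkpoint named below can be downloaded once from the Hub; after that, inference runs on your own machine. The example sentences are hypothetical, and a fine-tuning loop (for example with the library's Trainer) would be layered on top of the same model classes.

```python
from transformers import pipeline

# Downloaded once from the Hub, then cached and run locally: no text is sent to a third-party API.
classifier = pipeline(
    "sentiment-analysis",
    model="distilbert/distilbert-base-uncased-finetuned-sst-2-english",
)

# Hypothetical internal texts.
predictions = classifier([
    "The onboarding was smooth and the support team was helpful.",
    "The invoice was wrong again and nobody answered our emails.",
])

for p in predictions:
    print(p["label"], round(p["score"], 3))
```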

LangChain / LangGraph — agentic orchestration

LangChain remains the most widely used framework for building LLM applications. LangGraph, its extension for agentic workflows (multi-agent systems, reasoning loops), has become a de facto standard for complex AI applications.

A word of caution: LangChain has faced legitimate criticism for its complexity and sometimes opaque abstractions. LangGraph gives more explicit control over state and control flow, so prefer it for production projects.

LlamaIndex — the RAG specialist

For Retrieval-Augmented Generation (RAG) architectures, which let your LLMs answer questions grounded in your internal documents, LlamaIndex offers cleaner abstractions than LangChain for this specific task.

Layer 3: MLOps and Production Deployment

MLflow — model tracking and registry

MLflow has become the standard for ML experiment tracking. It records the metrics, parameters, and artifacts of each training run (automatically, with autologging) and manages a registry of versioned models.

In 2026: MLflow 3.x natively integrates tracking for LLMs and agents, not just traditional ML models.

Weights & Biases (W&B) — the premium competitor

For teams with more advanced needs (collaboration, rich visualizations, hyperparameter sweeps), W&B is the premium alternative. Its interface is generally considered more polished than MLflow's, but its cost can be a barrier.

Ray / Ray Serve — scalability and serving

For running inference at scale in production, Ray has established itself as the reference solution. Ray Serve handles model serving with load balancing and autoscaling, and it serves any Python model code, including PyTorch models.

Layer 4: Data and Feature Engineering

Polars — the successor to Pandas

Written in Rust, Polars is often 5 to 50 times faster than Pandas on common operations, depending on the workload, with a modernized syntax and native support for lazy evaluation. Migrating from Pandas means learning its expression-based API (there is no index), but the performance gain is typically noticeable on datasets over 1M rows.

dbt — data transformation in versioned SQL

dbt (data build tool) has revolutionized how data teams transform data in their warehouses. With version-controlled SQL models, built-in tests, and automatically generated documentation, it has become the standard for the transformation layer.

How to Build Your ML Stack in 2026

Minimalist stack (SMBs, first ML projects): scikit-learn + MLflow + Hugging Face Transformers + dbt

Intermediate stack (mid-sized companies, data team of 5-20 people): PyTorch + LangGraph + LlamaIndex + MLflow + Ray Serve + Polars + dbt

Advanced stack (large enterprises, custom LLM projects): PyTorch + Hugging Face + LangGraph + W&B + Ray + Kubernetes + sovereign data (on-premises or sovereign cloud)

Conclusion

The ML ecosystem has stabilized around a few dominant frameworks — but it's still evolving fast, especially on the LLMs and MLOps layers. The key: choose tools based on your actual use cases, not trends.

At Ikasia, we train your teams on these frameworks in the context of your real projects — not on toy cases disconnected from your business challenges.

Want to audit your current ML stack or build a tailored tooling roadmap? Contact us to discuss.

