OpenAI FedRAMP Certified: How AI Security for the US Government Changes the Game for French Enterprises

The announcement went almost unnoticed in France, yet it sends a powerful signal to every organization still hesitant about deploying AI in sensitive environments. OpenAI has just obtained FedRAMP Moderate certification for ChatGPT Enterprise and its API, officially opening the doors of US federal agencies to its solutions. Behind this American technical accreditation lie issues of governance, trust, and organizational transformation that resonate directly with the concerns of French enterprises, whether they are subject to GDPR or NIS2 requirements or simply looking for a solid framework for their AI strategy.

FedRAMP Moderate: Understanding the Signal Behind the Certification

The FedRAMP program (Federal Risk and Authorization Management Program) is the security framework the US government mandates for cloud services that process federal data. Achieving the "Moderate" level means OpenAI has demonstrated compliance with over 300 security controls covering access management, encryption, data traceability, incident management, and operational resilience.

For French enterprises, the most relevant comparison is with ANSSI's SecNumCloud framework or with the requirements of the NIS2 directive as transposed into French law. Although FedRAMP and SecNumCloud are not equivalent, the underlying dynamic is the same: major AI vendors must now prove their security maturity to access public procurement and regulated sectors.

This movement reflects a fundamental trend: generative AI is leaving the experimental phase to enter a phase of structured industrialization. Companies waiting for a hypothetical "perfectly secure AI" before acting risk being overtaken by those building their competencies and governance processes now.

Concrete Use Cases for Sensitive Sectors

FedRAMP certification opens very concrete doors on the American side: automated audit report writing, analysis of sensitive but unclassified contractual data, and decision-making assistance in restricted-access environments. For French enterprises in equivalent sectors, the parallel is immediate.

In the banking and insurance sector, compliance and risk teams can now consider AI tools for contract analysis, anomaly detection in financial flows, or the generation of regulatory summaries, provided the selected solutions meet DORA requirements and ACPR recommendations. OpenAI's FedRAMP certification is an additional argument for opening a dialogue with CIOs and Data Protection Officers.

In the defense and aerospace industry (Airbus, Thales, Safran…), the question is no longer "can we use AI?" but "how do we deploy it in a sovereign and auditable way?" Solutions like Azure OpenAI Service (already available in an Azure region in France), combined with private deployment architectures, enable companies to use OpenAI's models while keeping control of their data.

In the healthcare sector, certification of AI solutions by demanding government authorities reassures medical leadership and data protection officers. Use cases like structuring medical reports, ICD-10 coding assistance, or scientific literature synthesis become more easily defensible in executive committee meetings.

For French local authorities and public establishments, this news is a wake-up call: if the US government is integrating generative AI into its sovereign processes, the question of AI in French public services cannot remain in limbo indefinitely. Anticipating, experimenting in controlled environments, and training public sector employees today means gaining a decisive advantage.

AI Governance: What French Enterprises Must Remember

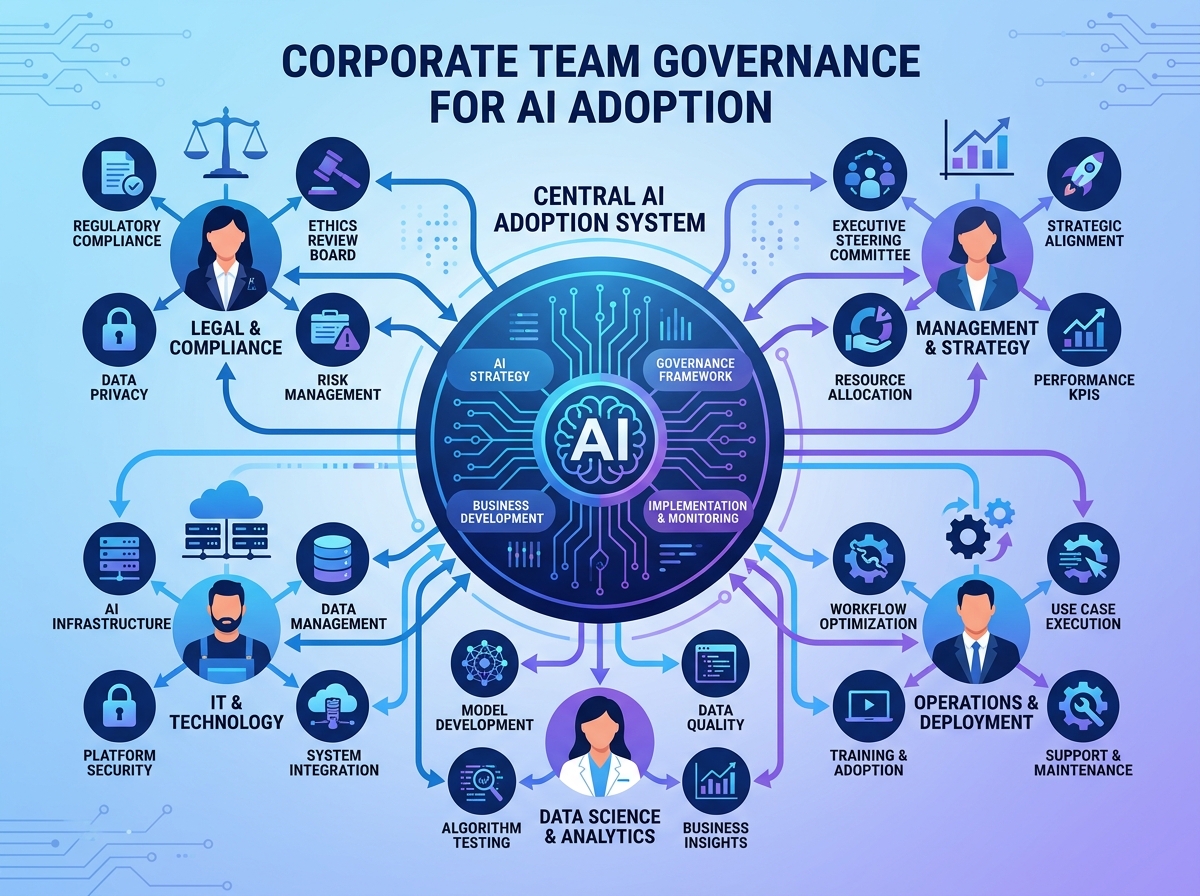

OpenAI's FedRAMP certification illustrates a reality we observe daily in our consulting engagements: the question is no longer technical, it is organizational. The companies most advanced in AI adoption are not necessarily those with the best data scientists; they are those that have built a clear governance framework.

Concretely, this translates into four priority initiatives:

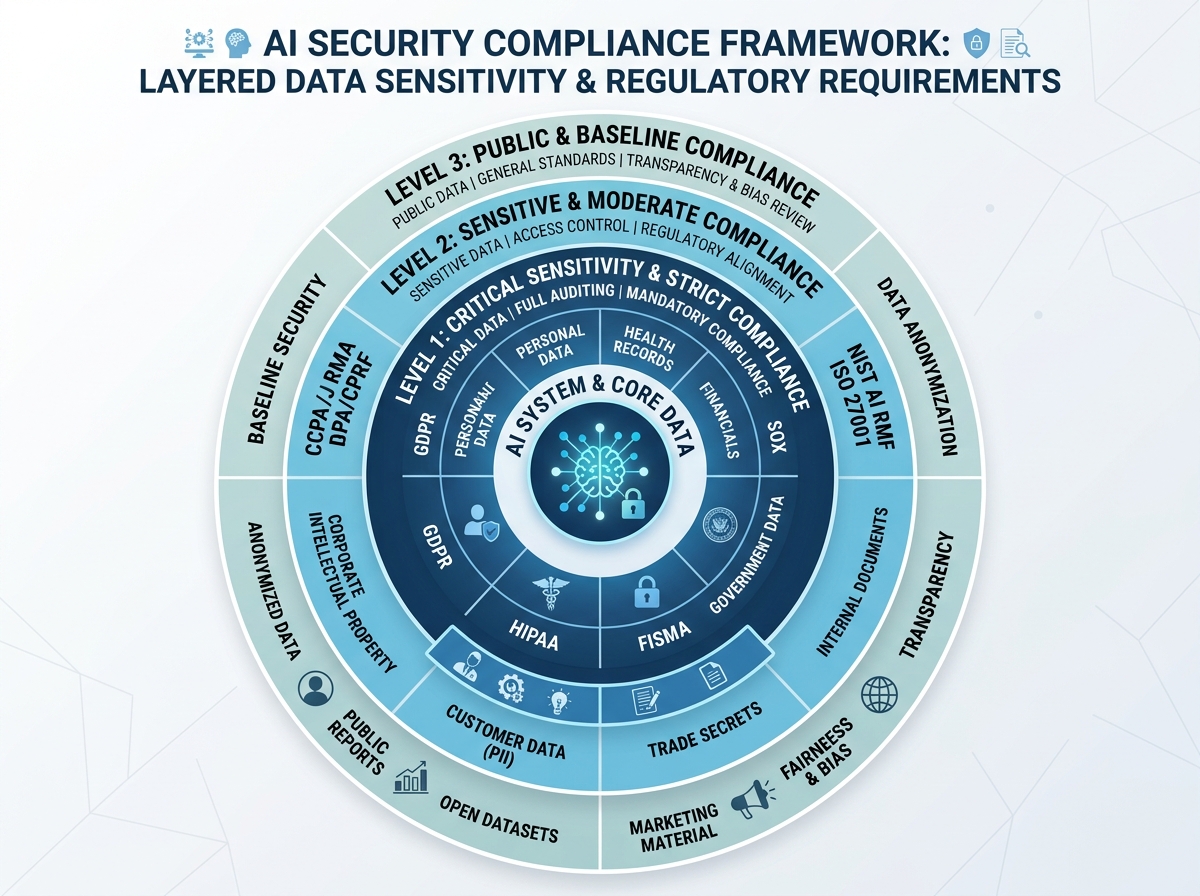

- Mapping AI use cases according to their data sensitivity level (public, internal, confidential, regulated)

- AI usage policy defining authorized tools, conditions of use, and responsibilities

- AI vendor evaluation process integrating compliance criteria (GDPR, NIS2, third-party certification)

- Human oversight mechanisms ensuring that critical decisions remain auditable and contestable
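To make the first, second, and fourth initiatives more tangible, here is a minimal Python sketch of a use-case register checked against a usage policy. Only the four sensitivity levels come from the list above; the deployment categories, the thresholds assigned to them, and the names `UseCase`, `Decision`, `assess`, and `MAX_LEVEL_BY_DEPLOYMENT` are hypothetical examples chosen for illustration, not a recommended policy or any vendor's API.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """The four data sensitivity levels from the mapping step, least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3


# Hypothetical example policy: the most sensitive level each kind of tool may process.
# Real thresholds must come from your own risk analysis, your DPO and your sector rules.
MAX_LEVEL_BY_DEPLOYMENT = {
    "public_chatbot": Sensitivity.PUBLIC,
    "enterprise_saas": Sensitivity.INTERNAL,
    "eu_hosted_cloud": Sensitivity.CONFIDENTIAL,
    "private_deployment": Sensitivity.REGULATED,
}


@dataclass(frozen=True)
class UseCase:
    name: str
    sensitivity: Sensitivity
    deployment: str
    critical_decision: bool = False  # outputs feed a decision that must stay auditable and contestable


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str
    human_review: bool


def assess(use_case: UseCase) -> Decision:
    """Check one registered use case against the example policy above."""
    review = use_case.critical_decision  # critical decisions always require documented human review
    limit = MAX_LEVEL_BY_DEPLOYMENT.get(use_case.deployment)
    if limit is None:
        # Tools absent from the policy are not authorized at all.
        return Decision(False, f"'{use_case.deployment}' is not an authorized tool", review)
    if use_case.sensitivity > limit:
        return Decision(
            False,
            f"{use_case.sensitivity.name} data exceeds the {limit.name} limit of '{use_case.deployment}'",
            review,
        )
    return Decision(True, "within policy", review)


if __name__ == "__main__":
    register = [
        UseCase("Regulatory summary drafting", Sensitivity.INTERNAL, "enterprise_saas"),
        UseCase("Customer contract analysis", Sensitivity.REGULATED, "enterprise_saas", critical_decision=True),
    ]
    for uc in register:
        decision = assess(uc)
        print(f"{uc.name}: allowed={decision.allowed}, human_review={decision.human_review} ({decision.reason})")
```

Under this example policy, the contract-analysis use case is rejected because regulated data exceeds the limit set for the enterprise SaaS tool, and it is flagged for human review. Ordering the levels in an `IntEnum` keeps each rule a single comparison, so a threshold change is a one-line edit that an auditor can read.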

The European AI Act, whose obligations apply progressively from 2025 onward, follows a similar logic: classify AI systems by risk level, document choices, and train users. Companies that anticipate this governance by drawing on existing frameworks such as FedRAMP will be better equipped for upcoming regulatory audits.

Training Teams: The Invisible Challenge of AI Compliance

A certification like FedRAMP is only valuable if end users understand what it implies. This may be the most underestimated point in French companies' AI strategies: employee training is not a side benefit of AI transformation, it is a precondition for its success.

We regularly observe in our engagements that teams lack guidance on fundamental questions: What data can be submitted to an external AI model? How do you evaluate the reliability of a generated response? What legal framework applies to my sector? How do you document AI usage for audit purposes?
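The first of these questions, what data can be submitted to an external model, can be partly backed by a pre-submission screen. The sketch below is a minimal example under stated assumptions: the three regular expressions (email addresses, French IBANs, French phone numbers) and the `screen_prompt` helper are hypothetical, they only catch obvious identifiers, and they do not replace a data classification policy or a dedicated data-loss-prevention tool.

```python
import re

# Hypothetical starter patterns; a real data-loss-prevention tool covers far more cases.
# Order matters: IBANs are masked before phone numbers so their digits are not re-matched.
PATTERNS = [
    ("IBAN", re.compile(r"\bFR\d{2}(?:\s?[0-9A-Z]{4}){5}\s?[0-9A-Z]{3}\b")),
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")),
    ("PHONE", re.compile(r"(?:\+33\s?|\b0)[1-9](?:[\s.-]?\d{2}){4}\b")),
]


def screen_prompt(text):
    """Mask obvious personal identifiers and return (masked_text, kinds_found)."""
    found = []
    for label, pattern in PATTERNS:
        # subn returns the new string and the number of replacements made.
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            found.append(label)
    return text, found


if __name__ == "__main__":
    masked, kinds = screen_prompt(
        "Contact jean.dupont@example.fr or 06 12 34 56 78, IBAN FR76 3000 6000 0112 3456 7890 189."
    )
    print(masked, kinds)
```

Returning the list of detected kinds, not just the masked text, lets a team log or block a submission instead of silently rewriting it, which keeps the check visible to users and auditors.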

Skills development must cover three complementary dimensions:

- AI literacy: understanding how large language models work, their strengths and their limitations

- Security and compliance: mastering digital hygiene rules applied to AI (prompt injection, data leakage, algorithmic bias)

- Business usage: knowing how to design effective prompts, evaluate outputs, and integrate AI into existing workflows

These three dimensions address different audiences (executive leadership, IT teams, operational departments) and require adapted teaching formats: practical workshops, certified training, and on-the-ground change management support.

OpenAI's FedRAMP certification is far more than an American administrative accreditation. It marks a turning point: generative AI is entering an era of institutional maturity, and French enterprises have every reason to draw on it to accelerate their own transformation, ambitiously but in a structured way.

At Ikasia, we support French companies at every stage of this journey: from defining their AI strategy to training their teams, including auditing their governance and selecting solutions adapted to their regulatory constraints.

Ready to take the next step? Discover our training programs and consulting offerings at ikasia.ai and let's discuss your specific context.
